The Real Problem With Most Soccer Betting Tips

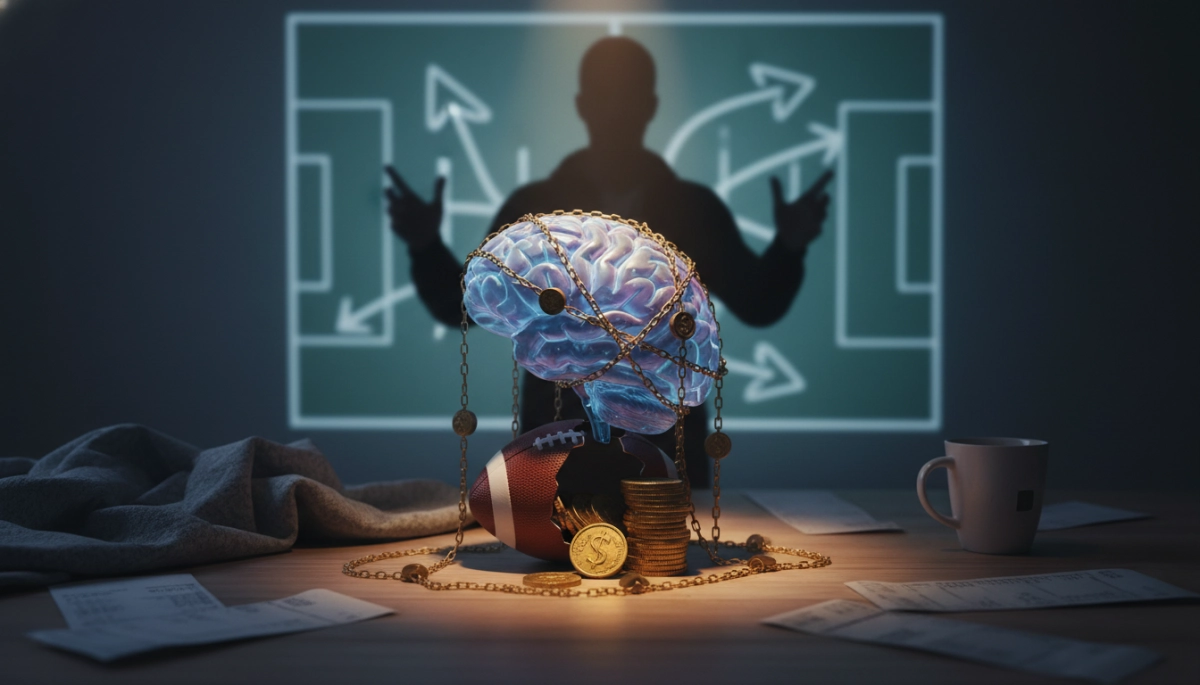

Most bettors don’t lose because they back the wrong teams. They lose because they accept reasoning that sounds convincing without interrogating whether it actually holds up. A tip arrives — from a forum, a social media account, or their own analysis — and if it feels right, it gets backed. That feeling is where the money goes.

Evaluating soccer betting tips critically isn’t about cynicism. It’s about applying consistent standards to the reasoning behind a selection, whether that reasoning came from an external tipster or your own pre-match research. The process is the same. The discipline required is the same. And the stakes are real either way.

Why Surface-Level Reasoning Passes the First Test So Easily

Weak analysis is remarkably good at disguising itself. It arrives dressed in plausible language — recent form, head-to-head records, home advantage — without real interrogation of what those factors mean in context. A tipster who notes that a team has won four of their last five home matches isn’t necessarily wrong, but that observation alone tells very little about actual value.
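To see how little a four-from-five record pins down, here is a rough sketch that puts a confidence interval around it. It uses the standard Wilson score interval; the function name and the choice of a 95% level are illustrative assumptions, not something a tipster would necessarily report.

```python
import math

def wilson_interval(wins: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% Wilson score interval for a win proportion."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = wins / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return centre - half, centre + half

# Four wins from the last five home matches
low, high = wilson_interval(4, 5)
print(f"Plausible underlying win rate: {low:.0%} to {high:.0%}")
```

The interval runs from roughly 38% to 96%, which is wide enough to be consistent with both an ordinary side and a dominant one. The interval also treats matches as independent and equally difficult, which real fixtures are not, so treat it as a lower bound on the uncertainty.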

The question that separates surface reasoning from genuine insight is: why does this information give an edge over the market price? Bookmakers price millions of matches annually. Their models are sophisticated, continuously updated, and shaped by the same publicly available data most analysts use. If a tip is based entirely on information the market already knows, it’s not analysis — it’s repetition with a conclusion attached.
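One way to make "the market price" concrete is to convert the odds into implied probabilities and strip out the bookmaker's margin. The sketch below assumes decimal odds and uses simple proportional normalisation, which is only one of several ways to remove the margin; the prices are hypothetical.

```python
def implied_probabilities(decimal_odds: list[float]) -> tuple[list[float], float]:
    """Return margin-free probabilities and the bookmaker margin (overround - 1)."""
    raw = [1.0 / o for o in decimal_odds]      # raw implied probabilities
    overround = sum(raw)                       # exceeds 1 when a margin is built in
    fair = [r / overround for r in raw]        # proportional normalisation (an assumption)
    return fair, overround - 1.0

# Hypothetical home / draw / away prices
fair, margin = implied_probabilities([1.50, 4.20, 6.50])
print([f"{p:.1%}" for p in fair], f"margin {margin:.1%}")
```

A tip only claims an edge if the analyst's own probability for the outcome is higher than the corresponding margin-free figure, and the reasoning should explain why.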

A useful illustration: during the 2022–23 Premier League season, several widely followed tipsters consistently recommended high-profile clubs on the basis of squad quality and league position alone. That approach ignored how efficiently those clubs’ odds had already been compressed by public money and market movement. Backing short-priced favourites because they’re good teams is one of the most common ways recreational bettors systematically erode their bankroll.

Recency Bias Is the Sharpest Edge in the Wrong Direction

Recency bias is arguably the single most corrosive cognitive distortion in football betting. It causes bettors and tipsters alike to overweight recent results and underweight the broader statistical sample that better predicts future performance. A team that has won three matches in a row looks like it has momentum; statistically, the streak may simply be variance, with results likely to regress toward the team’s longer-run level.

This is especially pronounced in congested fixture schedules. A side recording back-to-back clean sheets might appear defensively solid, but if those performances came against bottom-half opposition and underlying expected goals data suggests the goalkeeper made several low-probability saves, the picture shifts considerably.

Critically evaluating any tip requires asking whether the reasoning would still hold if the last three results were removed. If the entire case for a selection collapses without those results, recency bias is doing most of the analytical work. That’s not a framework — it’s pattern-matching dressed up as expertise.
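The "remove the last three results" test can be run mechanically. The sketch below uses points per game as a crude stand-in for team strength (an assumption; expected goals data would be a better input where available), and the results list is invented for illustration.

```python
def points_per_game(results: list[str]) -> float:
    """Average league points from a list of 'W', 'D', 'L' results."""
    points = {"W": 3, "D": 1, "L": 0}
    return sum(points[r] for r in results) / len(results)

def recency_check(results: list[str], drop_last: int = 3) -> tuple[float, float]:
    """Compare points per game with and without the most recent matches.

    `results` must be ordered oldest to newest.
    """
    if len(results) <= drop_last:
        raise ValueError("not enough matches left after dropping recent ones")
    return points_per_game(results), points_per_game(results[:-drop_last])

# Hypothetical 12-match run ending in three straight wins
form = ["L", "D", "L", "W", "D", "L", "D", "L", "L", "W", "W", "W"]
with_recent, without_recent = recency_check(form)
print(f"All 12: {with_recent:.2f} ppg, first 9 only: {without_recent:.2f} ppg")
```

Here the full run averages 1.25 points per game, but the first nine matches average only 0.67. If the tip's case rests on the first figure, the last three results are carrying it.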

Building a Structured Framework for Tip Evaluation

A framework isn’t a checklist completed mechanically before placing a bet. It’s a set of interrogative habits that become second nature, forcing every tip through the same scrutiny. The goal is to establish whether the reasoning behind a selection is genuinely predictive or merely plausible.

It starts with one foundational question: what is the core assumption underpinning this tip, and is there evidence the market has not fully priced it in? Every legitimate betting edge rests on an inefficiency — something the analyst knows, weighs differently, or interprets more accurately than the consensus. If that inefficiency cannot be articulated clearly, the tip has no foundation worth building on.

From there, a structured evaluation moves through several distinct layers:

- Source quality: Is the reasoning backed by data, or primarily narrative? A tipster citing expected goals differentials or pressing intensity metrics is working from a different evidential base than one referencing atmosphere or gut instinct.

- Sample size: Does the supporting evidence draw from a meaningful number of observations? Five games is not a trend. It is noise with good marketing.

- Market context: Has the line moved significantly since opening? Sharp line movement often signals that informed money has identified value, which also means some of that value may be gone at the current price. A line that hasn’t moved on a heavily backed team can indicate the market is absorbing public money without adjusting, which tells its own story.

- Situational variables: Are there fixture-specific factors — rotation risk, travel fatigue, squad depth — that meaningfully alter the probability beyond what league-level data captures?

Each layer functions as a filter. A tip that clears all four doesn’t guarantee a winning bet, but it means the decision rests on something more durable than a feeling or a hot streak.
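For anyone who prefers to log their evaluations, the four layers can be recorded in a small structure. This is a minimal sketch: the field names, the judgement-call booleans, and the sample-size threshold of 20 are all illustrative assumptions rather than fixed rules.

```python
from dataclasses import dataclass

@dataclass
class TipAssessment:
    data_backed: bool            # source quality: data rather than narrative
    sample_size: int             # observations behind the key claim
    market_not_priced_in: bool   # judgement: line movement reviewed, edge still plausible
    situational_reviewed: bool   # rotation, travel, squad depth considered

def failed_filters(tip: TipAssessment, min_sample: int = 20) -> list[str]:
    """Return the names of the filters this tip does not clear."""
    failures = []
    if not tip.data_backed:
        failures.append("source quality")
    if tip.sample_size < min_sample:  # threshold is an arbitrary illustration
        failures.append("sample size")
    if not tip.market_not_priced_in:
        failures.append("market context")
    if not tip.situational_reviewed:
        failures.append("situational variables")
    return failures

tip = TipAssessment(data_backed=True, sample_size=5,
                    market_not_priced_in=True, situational_reviewed=False)
print(failed_filters(tip))
```

An empty list means the tip cleared every filter; it says nothing about whether the bet will win. Keeping the failures as a list makes it easy to review later which filter rejects tips most often.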

Separating Tipster Credibility From Tipster Confidence

Confidence and credibility frequently diverge. The most visible tipsters — those with the largest audiences and the most emphatic language — are not necessarily the most analytically rigorous. Confidence is a performance. Credibility is built from documented, verifiable results over a meaningful sample.

When evaluating a tipster’s output, examine their historical record, but not simply their strike rate. Strike rate without the average odds is almost meaningless, because the break-even strike rate is one divided by the decimal odds. A tipster recording 55% wins on selections priced around 1.50 is operating at a loss: that price needs roughly 67% winners just to break even. A tipster winning 38% of bets at average odds of 3.20 is producing meaningful returns, since break-even at that price is about 31%. The mathematics of expected value doesn’t care how compelling the analysis reads.
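The arithmetic behind those two tipsters can be checked in a few lines. The sketch assumes decimal odds, flat one-unit stakes, and that every bet is priced close to the stated average; the function names are illustrative.

```python
def expected_roi(strike_rate: float, avg_odds: float) -> float:
    """Expected return per unit staked at flat stakes and decimal odds."""
    return strike_rate * avg_odds - 1.0

def break_even_rate(avg_odds: float) -> float:
    """Strike rate needed for zero expected profit at the given decimal odds."""
    return 1.0 / avg_odds

for rate, odds in [(0.55, 1.50), (0.38, 3.20)]:
    print(f"{rate:.0%} at {odds:.2f}: ROI {expected_roi(rate, odds):+.1%}, "
          f"break-even {break_even_rate(odds):.1%}")
```

The first tipster loses about 17.5% of every unit staked despite winning more often than not; the second returns about 21.6% while losing most of their bets.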

What Verified Track Records Actually Reveal

Verified track records — documented through third-party platforms rather than self-reported — expose patterns that selective posting conceals. Most importantly, they reveal variance. A tipster with two profitable months may simply be riding normal variance within a break-even strategy. The question isn’t whether they’re winning right now; it’s whether long-run results suggest genuine edge or fortunate timing.
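How easily can a tipster with no edge look profitable over a short window? The sketch below computes the exact binomial probability of finishing in profit, assuming independent bets, flat one-unit stakes, and identical odds on every selection. The figure of 60 bets over two months is a made-up example.

```python
from math import comb

def prob_profit(n_bets: int, win_prob: float, odds: float) -> float:
    """Exact probability that flat 1-unit stakes end in profit after n_bets."""
    total = 0.0
    for wins in range(n_bets + 1):
        profit = wins * odds - n_bets  # returns minus total staked
        if profit > 0:
            total += (comb(n_bets, wins)
                      * win_prob**wins
                      * (1 - win_prob)**(n_bets - wins))
    return total

# A break-even tipster: 50% winners at decimal odds of 2.00, 60 bets in two months
p = prob_profit(60, 0.50, 2.00)
print(f"Chance of showing a profit with zero edge: {p:.0%}")
```

Under these assumptions a zero-edge tipster finishes in profit about 45% of the time, so two good months on their own are weak evidence of skill. Raising `n_bets` shows how slowly a track record separates real edge from luck.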

It’s also worth watching how a tipster handles losing runs. Analysts with genuine methodology explain losses in terms of process — what the model suggested versus what happened on the pitch — rather than blaming bad luck or referees. When a tipster responds to a losing sequence by increasing posting volume or shifting statistical indicators, that is a signal worth noting. Methodology shouldn’t be retrofitted to results.

How Self-Generated Tips Fail in Different Ways

Bettors who do their own research are not immune to the same failures — in some respects, they are more exposed. When a person generates their own tip, there is no external voice to question the reasoning. Confirmation bias operates without friction, beginning with the conclusion and working backward through the evidence.

This is particularly common in markets where a bettor has strong prior opinions — clubs they follow closely or matchups with historical significance. Familiarity breeds a particular kind of analytical overconfidence. Knowing a club’s squad deeply can create the illusion of informational edge when the market has already absorbed that knowledge through sharper, more systematic channels.

The practical corrective is to treat self-generated tips with the same skeptical distance as any external source. Writing the reasoning down before checking the odds forces genuine analytical commitment — removing the ability to unconsciously shape the narrative around a price that already looks attractive. If the written reasoning would still justify the bet at slightly different odds, the analysis is doing real work. If it only makes sense at the current price, the odds may be driving the thinking rather than the other way around.
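The "slightly different odds" test has a simple numerical form: commit to a probability estimate first, then see how far the price could shorten before the bet stops being worthwhile. The function names and the example figures below are hypothetical.

```python
def min_acceptable_odds(estimated_prob: float, required_edge: float = 0.0) -> float:
    """Lowest decimal odds giving an expected ROI of at least required_edge."""
    if not 0 < estimated_prob <= 1:
        raise ValueError("estimated_prob must be in (0, 1]")
    return (1.0 + required_edge) / estimated_prob

def price_tolerance(estimated_prob: float, offered_odds: float) -> float:
    """Fraction by which the offered odds could shorten before expected value hits zero."""
    return 1.0 - min_acceptable_odds(estimated_prob) / offered_odds

# Probability written down BEFORE looking at the market (hypothetical figures)
my_estimate = 0.45
offered = 2.40
print(f"Fair odds on my estimate: {min_acceptable_odds(my_estimate):.2f}")
print(f"Room before the bet stops making sense: {price_tolerance(my_estimate, offered):.1%}")
```

A tolerance close to zero, or negative, means the bet only works at exactly the current price or not at all, which is the situation the paragraph above warns about.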

Applying the Standard Consistently — and Then Trusting It

The framework only has value if applied without exception. That means running a tip from a trusted source through the same scrutiny as one from an unfamiliar account, and subjecting a self-generated selection that feels exceptionally strong to the same interrogation as one that feels uncertain. Selective application is not discipline — it is confirmation bias wearing the costume of process.

Consistent application reveals something useful over time: most tips, whether external or self-generated, fail somewhere within the framework. The core assumption is underspecified, the sample size is too small, the market has already moved, or the situational variables don’t meaningfully alter the probability. Recognising that most selections don’t clear every filter is not a cause for paralysis; it is the framework working as intended. Placing fewer, better-reasoned bets with genuine conviction is a more viable long-term approach than backing a high volume of tips that felt good in the moment.

This is the aspect of critical evaluation most bettors find genuinely difficult, because it requires accepting inaction as a legitimate output. Walking away from a match because the reasoning doesn’t hold is not a failure of nerve. It is the clearest possible sign that the process is actually running, rather than simply being performed.

For those who want to ground this approach in a more formal understanding of probability and market efficiency, the academic literature on overconfidence in judgment under uncertainty provides a rigorous foundation for understanding why human intuition fails so systematically in probabilistic environments — and why structured evaluation exists as the corrective.

The sharpest bettors are not those who find more tips. They are those who have built the habit of asking harder questions about the tips they already have — and who have developed the discipline to act only when the answers are genuinely satisfactory. That standard, applied consistently over time, is what separates analytical betting from sophisticated-sounding guesswork.